Image Source: Photo by Clint Adair on Unsplash

fledge.io Per App SDWAN – no complexity, high performance, highly secure, lightweight Service Mesh

In the blog post on fledge.io Cloud, I talked about the high-performance, secure app connectivity that fledge.io offers. This post focuses on the service mesh, i.e. fledge.io Per App SDWAN.

Several popular service mesh implementations with large community followings already exist in the industry, so do we need a new one? Why did fledge.io develop its own service mesh?

To answer this question, let's look at what a service mesh does and the complexities and challenges associated with it.

A service mesh is a network fabric that provides connectivity to the application microservices. It provides a framework for service discovery, manages network connectivity between microservices, tracks movement of microservices, applies security policies and monitors connections through analytics.

Some of the challenges with current service mesh designs include:

- Sidecar-related inefficiencies – To keep the networking transparent to the microservices, the service mesh logic is generally implemented as a sidecar proxy (a separate container). This means that network traffic traverses the IP stack up and down multiple times, which hurts performance. The additional proxy containers also make the footprint bulkier.

- Complexity in security – Service mesh implementations provide a security framework based on mutual TLS authentication. This can be quite complex (e.g. certificate and key management), especially if you have multiple microservices, nodes, locations and clouds. Policy enforcement itself happens in the sidecar proxies, which is another reason the proxies are needed.

- Kubernetes-only option – Service mesh implementations mostly support Kubernetes-only environments. While Kubernetes has become the de facto orchestration framework, applications that span multi-cloud and edge environments often encounter disparate environments with respect to footprint, capabilities and needs. If customers want to use a Docker-only environment at a given location but Kubernetes elsewhere in the application deployment, such hybrid scenarios are not easy to set up.

- Implementations tend to be reactive – Service mesh implementations have evolved in parallel with the orchestration frameworks as independent efforts. The orchestration frameworks have been designed to accept subsystems such as network and storage as plugins, to stay open and offer choice to customers and the community. As a result, the orchestrator and the service mesh run independently yet remain coupled to each other. The service mesh therefore tends to react to the actions of the orchestrator, which can lead to convergence delays.

So what is the fledge.io approach?

The fledge.io service mesh acts like an Application SDWAN. It is a high-performance, highly secure, lightweight implementation for applications across multi-cloud and edge environments, without all the complexity.

Here are some of the highlights:

- Integrated orchestrator + service mesh – The fledge.io service mesh is fully integrated with the orchestrator. It is provisioned automatically and transparently at the time of application deployment. Any changes to the application, e.g. microservice placement or migration, or policy changes, are therefore applied to the orchestrator and the mesh together.

- Highly secure connectivity – All the traffic across the WAN is fully encrypted and secure. There is no need to have any VPN provisioned across the different locations.

- Inline security and policy enforcement based on eBPF – The fledge.io service mesh provides built-in inline packet filtering and application firewall capabilities that offer fine-grained, just-in-time security for the application, without the need for complex service chaining.

- Per-App service mesh – Each application gets its own service mesh and does not share it with any other application, even if the applications are deployed on the same nodes.

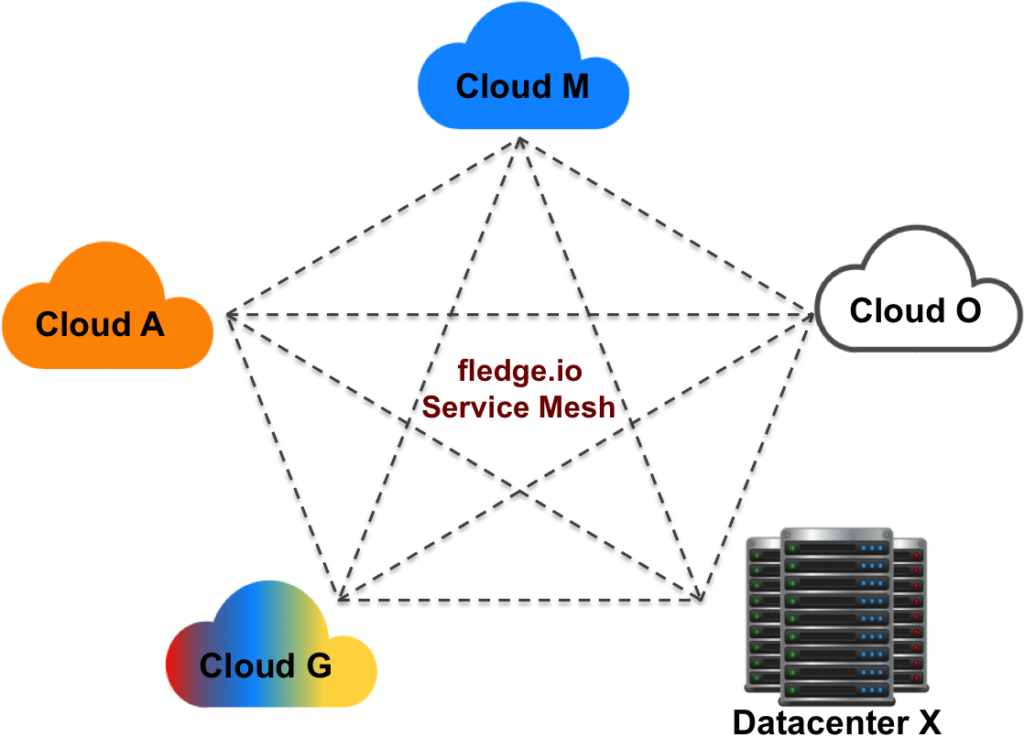

- Any-to-any connectivity – The fledge.io service mesh provides connectivity across any cloud, datacenter and edge environment. The orchestrator and service mesh work with both Kubernetes and Docker-only (non-Kubernetes) environments. For example, an application running on a Kubernetes cluster in a cloud can connect to a small edge node running a couple of Docker containers that are part of the same application.

- One-hop full mesh across WAN – Each location has a direct per-app VPN connection with every other location within the application span, giving full mesh connectivity across all locations.

- Dynamic mesh – Since the service mesh is coupled with fledge.io orchestration, any microservice migrations, canary updates or policy changes are instantly reflected in the service mesh as well. Workloads can be migrated across Kubernetes clusters, clouds and locations without losing connectivity among the application microservices.

- No sidecars – The fledge.io service mesh does not need any sidecars. It therefore avoids the multiple IP stack traversals, which makes it efficient and high performance.

- Works with the CNI of your choice – The fledge.io service mesh works with any CNI you choose for connectivity within a Kubernetes cluster.

- Monitoring based on eBPF – The service mesh provides rich monitoring of connectivity, performance and security data for all the connections based on fledge.io eBPF.

- No complexity – There is no complex key management, authentication or configuration to worry about. Since the service mesh is integrated with the orchestrator, key distribution happens automatically and securely.
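To make the one-hop full mesh point concrete: if every location in an application's span has a direct connection to every other location, then n locations need n(n-1)/2 connections. The short Python sketch below is illustrative arithmetic only. The location names and function names are hypothetical and are not part of any fledge.io API.

```python
from itertools import combinations


def full_mesh_links(locations):
    """Return one (a, b) pair per direct connection in a full mesh.

    Duplicate location names are ignored; each unordered pair appears once.
    """
    unique = sorted(set(locations))
    return list(combinations(unique, 2))


def full_mesh_link_count(n):
    """Number of direct connections needed to fully mesh n locations."""
    return n * (n - 1) // 2


# Hypothetical application span: two clouds, one datacenter, one edge site.
sites = ["cloud-a", "cloud-b", "datacenter", "edge-1"]

links = full_mesh_links(sites)
for a, b in links:
    print(f"{a} <-> {b}")

# The enumerated pairs agree with the closed-form count.
print(len(links), full_mesh_link_count(len(sites)))
```

Because the number of connections grows quadratically with the number of locations, provisioning them by hand quickly becomes tedious, which is why having the orchestrator set them up automatically matters.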

Just define the application and deploy – the capabilities described above take effect transparently, in real time.

Want to see fledge.io service mesh in action? Reach out to info@fledge.io

Pramodh Mallipatna

Founder and CEO

fledge.io